Introducing OpenStack

Only a few decades ago, many large computer hardware companies had their own fabrication
facilities and maintained competitive advantage by making specialty processors, but as
costs rose, fewer companies produced the volume of chips needed to remain profitable.
Merchant chip fabricators emerged, able to produce general-purpose processors at scale,
and drove costs down significantly. Having just a few computer chip manufacturers
encouraged standardized desktop and server platforms around the Intel x86 instruction
set, and eventually led to commodity hardware in the client-server market.

The rapid growth of the World Wide Web during the dot-com years of the early 2000s created
huge data centers filled with this commodity hardware. Although the hardware was powerful
and inexpensive, its architecture was often like that found in desktop computing, which was
not designed with centralized management in mind. No tools existed to manage hardware as a
collection of resources. To make matters worse, servers during this period generally lacked
hardware management capabilities (secondary management cards), just like their desktop
cousins. Unlike mainframes and large symmetric multiprocessing (SMP) machines, these
commodity servers required layers of management software to coordinate independent resources.

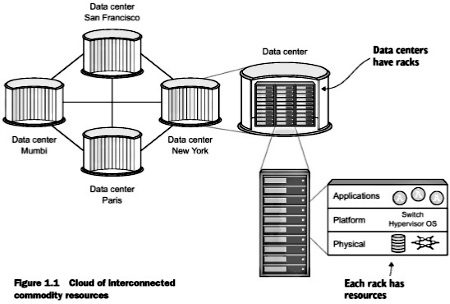

During this period, many management frameworks were developed internally by both public and
private organizations to manage commodity resources. Figure 1.1 shows collections of
interconnected resources spread across several data centers. With management frameworks,
these common resources could be used interchangeably, based on availability or user requirements.
While it's unclear exactly who coined the term, those able to harness the power of commodity
computing through management frameworks would say they had a "cloud" of resources.

Of the many commercial and open source management packages developed during this period,
the OpenStack project was one of the most popular. OpenStack provides a common platform for
controlling clouds of servers, storage, network, and even application resources. OpenStack
is managed through a web-based interface, a command-line interface (CLI), and an application
programming interface (API). Not only does this platform control resources, it does so
without requiring you to choose a specific hardware or software vendor. Vendor-specific
components can be replaced with minimal effort. OpenStack provides value for a wide range
of people in IT organizations.

One way to think about OpenStack is in the context of the Amazon buying experience. Users
log in to Amazon and purchase products, and the products are delivered. Behind the scenes,
a carefully orchestrated series of highly optimized steps gets products to your door as
quickly and inexpensively as possible. Twelve years after Amazon was founded, Amazon Web
Services (AWS) was launched. AWS brought the Amazon experience to computing resource
delivery. A server request that might take weeks from a local IT department could be
fulfilled with a credit card and a few mouse clicks with AWS. OpenStack aims to bring to
your organization the same level of orchestrated efficiency demonstrated by Amazon and
other service providers.

• For cloud/system/storage/network administrators - OpenStack controls many types of
commercial and open source hardware and software, providing a cloud management layer on
top of vendor-specific resources. Repetitive manual tasks like disk and network provisioning
are automated with the OpenStack framework.

• For the developer - OpenStack is a platform that can be used not only as an Amazon-like
service for procuring resources (virtual machines, storage, and so on) used in development
environments, but also as a cloud orchestration platform for deploying extensible applications
based on application templates. Imagine the ability to describe the infrastructure (X servers
with Y RAM) and software dependencies (MySQL, Apache2, and so on) of your application, and
having the OpenStack framework deploy those resources for you.
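
To make the idea of an application template more concrete, here is a minimal sketch in Python of describing infrastructure and software dependencies as data and deriving a deployment plan from it. This is not OpenStack's actual template format or API. The names `ServerSpec`, `AppTemplate`, and `plan`, and the package names, are hypothetical and exist only for illustration. The sketch prints the steps a framework might take rather than contacting any cloud.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass(frozen=True)
class ServerSpec:
    """One group of identical servers (hypothetical, for illustration only)."""
    role: str                       # e.g. "web" or "db"
    count: int                      # the "X servers" part of the description
    ram_mb: int                     # the "Y RAM" part of the description
    packages: Tuple[str, ...] = ()  # software dependencies to install


@dataclass
class AppTemplate:
    """A declarative description of an application's resources."""
    name: str
    servers: List[ServerSpec] = field(default_factory=list)

    def total_ram_mb(self) -> int:
        # Sum RAM across every server in every group.
        return sum(s.count * s.ram_mb for s in self.servers)

    def plan(self) -> List[str]:
        # Expand the description into one provisioning step per server.
        steps = []
        for spec in self.servers:
            pkgs = ", ".join(spec.packages) or "no extra packages"
            for i in range(spec.count):
                steps.append(
                    f"create {spec.role}-{i} ({spec.ram_mb} MB RAM): install {pkgs}"
                )
        return steps


# Assumed example application: two web servers and one database server.
template = AppTemplate(
    name="blog",
    servers=[
        ServerSpec(role="web", count=2, ram_mb=2048, packages=("apache2",)),
        ServerSpec(role="db", count=1, ram_mb=4096, packages=("mysql-server",)),
    ],
)

for step in template.plan():
    print(step)
```

The point of the sketch is the separation of concerns: the developer states what the application needs, and a framework decides how to turn that description into running resources.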
