Standard Network Path Metrics

By Shaun Hummel

Selecting performance metrics is key to an effective performance monitoring strategy. Before assigning performance metrics, we need to understand the typical industry-standard metrics in use. Performance metrics are used to detect performance problems and to determine their causes. The following sections describe the most common network performance metrics.

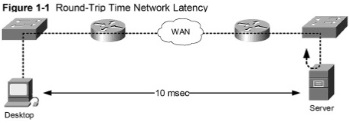

Network Latency

Network latency is a general term for the amount of time (delay) a packet spends on the wire: the total time from when a client makes a request until the response arrives, minus server and desktop processing time. Latency is most often expressed as round-trip time (RTT) from source to destination and back. The ping program is used to verify interface status and RTT latency, as shown in Figure 1-1. The measured latency is the travel time from the host where the ping was issued to the destination (server), plus the return time. Network latency is a cumulative metric based on all delays across the network to the server. Application response time is derived from the network latency along the path plus the server processing delay.
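Ping uses ICMP echo, which often requires elevated privileges to send from a program. As a rough, unprivileged stand-in, the minimal Python sketch below times TCP three-way handshakes: `connect()` returns once the server's SYN-ACK arrives, so each sample approximates one round trip plus a little host overhead. The function name `tcp_connect_rtt` and its parameters are hypothetical, and the result is an RTT estimate, not a reproduction of ping.

```python
import socket
import statistics
import time


def tcp_connect_rtt(host: str, port: int, samples: int = 5, timeout: float = 2.0) -> dict:
    """Estimate round-trip time in milliseconds by timing TCP handshakes.

    The client sends SYN and connect() returns once the SYN-ACK arrives,
    so each timed connect() is roughly one network round trip.
    """
    # Resolve once (IPv4 only) so DNS lookup time is excluded from the samples.
    address = socket.gethostbyname(host)
    rtts_ms = []
    for _ in range(samples):
        start = time.perf_counter()
        with socket.create_connection((address, port), timeout=timeout):
            pass  # handshake complete; close the connection immediately
        rtts_ms.append((time.perf_counter() - start) * 1000.0)
    return {
        "min_ms": min(rtts_ms),
        "avg_ms": statistics.mean(rtts_ms),
        "max_ms": max(rtts_ms),
    }
```

Against a real server you might call `tcp_connect_rtt("192.0.2.10", 443)`, where the address is a documentation placeholder; reporting minimum, average and maximum mirrors the summary that ping prints.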

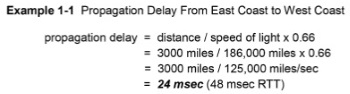

Propagation Delay

Network latency is the cumulative delay caused by fixed and variable delays. The fixed delays include propagation, device processing and serialization delay. Propagation delay is the time a signal takes to travel the total distance between routers on the network. It is based on the speed of light (186,000 miles per second) and the distance between locations. A signal propagates through copper and fiber media at approximately two thirds the speed of light, so propagation delay is the distance between source and destination divided by two thirds the speed of light. This is estimated to be an average of 6 microseconds per km or 10 microseconds per mile, a slightly conservative figure compared with the theoretical value of about 5 microseconds per km (8 microseconds per mile). Suboptimal routing and network design problems that add router hops and lengthen the physical path increase propagation delay.
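The propagation arithmetic above can be checked with a short Python sketch. It divides distance by two thirds of the speed of light in a vacuum (299,792.458 km/s) and, for comparison, applies the 6 microseconds per km estimate from the text. The 1,000 km distance is a hypothetical example and the function names are illustrative.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in a vacuum
VELOCITY_FACTOR = 2 / 3            # approximate for copper and fiber, per the text


def propagation_delay_ms(distance_km: float, velocity_factor: float = VELOCITY_FACTOR) -> float:
    """One-way propagation delay: distance divided by signal speed in the medium."""
    return distance_km / (SPEED_OF_LIGHT_KM_S * velocity_factor) * 1000.0


def estimated_delay_ms(distance_km: float, us_per_km: float = 6.0) -> float:
    """Rule-of-thumb estimate using the 6 microseconds per km figure."""
    return distance_km * us_per_km / 1000.0


distance = 1000.0  # hypothetical 1,000 km path between source and destination
print(round(propagation_delay_ms(distance), 2))      # 5.0 (one way)
print(round(2 * propagation_delay_ms(distance), 2))  # 10.01 (round trip)
print(estimated_delay_ms(distance))                  # 6.0 (rule of thumb)
```

The round-trip figure is what ping would contribute from propagation alone, before any processing, serialization or queuing delay is added.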

Device Processing Delay

Device processing delay is the time required to process packets at each network device between source and destination. Packets arrive at each device, where they are processed and then forwarded toward the destination. Typical network devices include routers, switches, wireless devices, load balancers and WAN optimizers. The rated throughput of a network device depends on its processor, memory and platform architecture. The primary solution for minimizing device processing delay is a hardware upgrade that increases device capacity.
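Because processing delay accrues at every device along the path, one simple way to reason about it is to add up per-device delays and find the largest contributor, the natural candidate for a hardware upgrade. The sketch below assumes a hypothetical four-device path; the `Hop` class, device names and microsecond values are placeholders, not measured or vendor figures.

```python
from dataclasses import dataclass


@dataclass
class Hop:
    name: str             # hypothetical device label
    processing_us: float  # assumed per-packet processing delay in microseconds


def total_processing_delay_us(path: list[Hop]) -> float:
    """Sum the per-device processing delay along a path (one direction)."""
    return sum(hop.processing_us for hop in path)


def slowest_hop(path: list[Hop]) -> Hop:
    """Return the device contributing the most processing delay."""
    return max(path, key=lambda hop: hop.processing_us)


# Hypothetical path; the delay values are placeholders for illustration only.
path = [
    Hop("access-switch", 5.0),
    Hop("core-router", 20.0),
    Hop("wan-optimizer", 150.0),
    Hop("load-balancer", 60.0),
]
print(total_processing_delay_us(path))  # 235.0
print(slowest_hop(path).name)           # wan-optimizer
```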

Serialization Delay

Serialization delay is the time required to place the bits of a packet onto the network media (wired or wireless). It is determined by the packet size and the link speed: the packet size in bits divided by the link speed in bits per second. Serialization delay is lower on faster campus links and higher on slower WAN links. On low-speed circuits, serialization delay is compounded when there are multiple router hops, because the packet is serialized again at each hop.
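Serialization delay follows directly from the packet size and link speed. This sketch computes it for a 1,500-byte packet on a T1 WAN circuit (1.544 Mbps) and on a 1 Gbps campus link, then sums it over a hypothetical store-and-forward path where the packet is serialized once per link. The function names and the path are illustrative assumptions.

```python
def serialization_delay_ms(packet_bytes: int, link_bps: float) -> float:
    """Time to clock a packet onto the media: bits divided by bits per second."""
    return packet_bytes * 8 / link_bps * 1000.0


def path_serialization_ms(packet_bytes: int, link_speeds_bps: list[float]) -> float:
    """Store-and-forward path: the packet is serialized again on every link."""
    return sum(serialization_delay_ms(packet_bytes, bps) for bps in link_speeds_bps)


print(round(serialization_delay_ms(1500, 1.544e6), 3))  # 7.772 (T1 WAN link)
print(round(serialization_delay_ms(1500, 1e9), 3))      # 0.012 (1 Gbps campus link)
# Hypothetical path: two 1 Gbps campus links plus three T1 WAN links
print(round(path_serialization_ms(1500, [1e9, 1e9, 1.544e6, 1.544e6, 1.544e6]), 3))  # 23.34
```

Almost all of the path total comes from the three slow WAN links, which is why multiple hops over low-speed circuits compound the delay.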

Queuing Delay

Queuing delay is a variable delay caused by data packets waiting in a device queue for servicing. The amount of queuing delay varies with network congestion, packet size and how busy the ingress and egress queues are when the packet arrives. Faster link speeds between network devices minimize queuing delay.
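No queuing formula is given here, so the sketch below swaps in the textbook M/M/1 queue (Poisson arrivals, exponentially distributed service times) purely to illustrate why faster links reduce queuing delay. In that model the mean wait in queue is ρ/(μ - λ), where λ is the packet arrival rate, μ is the link's service rate in packets per second and ρ = λ/μ is the utilization. Real device queues rarely satisfy these assumptions, and the traffic figures are hypothetical.

```python
def mm1_queuing_delay_ms(arrival_pps: float, link_bps: float, avg_packet_bytes: float) -> float:
    """Mean time a packet waits in queue (excluding its own transmission), M/M/1 model."""
    service_pps = link_bps / (avg_packet_bytes * 8)  # packets the link can send per second
    if arrival_pps >= service_pps:
        raise ValueError("offered load >= link capacity; the queue grows without bound")
    utilization = arrival_pps / service_pps
    return utilization / (service_pps - arrival_pps) * 1000.0


# Hypothetical load: 1,000 packets per second of 1,000-byte packets.
for link_bps in (10e6, 100e6):
    print(link_bps, round(mm1_queuing_delay_ms(1000, link_bps, 1000), 3))
```

With this load, moving from a 10 Mbps to a 100 Mbps link drops utilization from 0.8 to 0.08 and the mean queuing delay from 3.2 ms to under 0.01 ms.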

Jitter

Jitter is a variable delay that is of concern mainly for voice and video traffic. It results from packets waiting in queues for variable amounts of time before gaining access to the network media. Jitter is the variation (in msec) in delay between packets caused by queuing. Voice and video traffic classes require constant delay for proper service quality, so the recommendation is to use de-jitter buffers at the receiving node to reduce jitter. The de-jitter buffer size should be set to produce a constant delay and according to traffic load. Setting the buffer size too low causes packet loss, while setting it too high causes excessive delay.
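No specific jitter calculation is given above. One widely used method, assumed here, is the interarrival jitter estimator from RFC 3550 (RTP), which keeps a smoothed average of the change in transit time between consecutive packets. The second function models a simplified fixed-delay de-jitter buffer: it schedules each packet relative to the first packet's arrival and treats any packet that arrives after its playout time as lost. Both function names, the 20 ms packet spacing and the arrival times are hypothetical.

```python
def rfc3550_jitter(send_ms: list[float], recv_ms: list[float]) -> float:
    """Interarrival jitter per RFC 3550: J += (|D| - J) / 16 for each packet pair."""
    jitter = 0.0
    prev_transit = None
    for sent, received in zip(send_ms, recv_ms):
        transit = received - sent
        if prev_transit is not None:
            jitter += (abs(transit - prev_transit) - jitter) / 16.0
        prev_transit = transit
    return jitter


def late_packet_ratio(send_ms: list[float], recv_ms: list[float], buffer_ms: float) -> float:
    """Fraction of packets a simplified fixed-delay de-jitter buffer would discard.

    The first packet's transit time is the reference; each packet is played at
    its send offset plus that reference plus buffer_ms, and later arrivals are lost.
    """
    base = recv_ms[0] - send_ms[0]
    late = sum(1 for s, r in zip(send_ms, recv_ms) if r > s + base + buffer_ms)
    return late / len(send_ms)


# Hypothetical voice stream: one packet every 20 ms with variable queuing delay.
send = [0.0, 20.0, 40.0, 60.0, 80.0]
recv = [50.0, 72.0, 95.0, 111.0, 138.0]
print(round(rfc3550_jitter(send, recv), 2))  # 0.94
for buffer_ms in (0.0, 5.0, 10.0):
    print(buffer_ms, late_packet_ratio(send, recv, buffer_ms))
```

In this sample, no buffering discards 80% of packets, a 5 ms buffer discards 20% and a 10 ms buffer discards none at the cost of 10 ms of added delay, which mirrors the buffer-sizing trade-off described above.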
