An array is a simple data structure in which each element can be accessed directly. For example main memory is constructed as an array. If the data item being stored is larger than one byte, then multiple bytes can be allocated to the item, and the item is addressed as item number x item size. But what about storing an item whose size may vary? And what about removing an item if the relative positions of the remaining items must be preserved? In such situations, arrays give way to other data structures.

Linked Lists

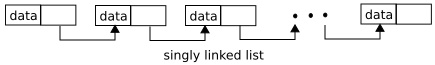

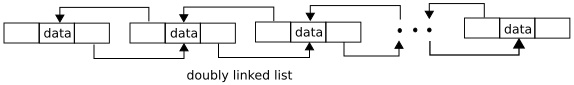

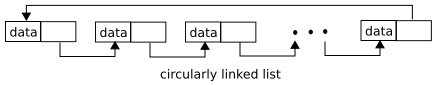

After arrays, lists are perhaps the most fundamental data structures in computer science. Whereas each item in an array can be accessed directly, the items in a list must be accessed in a particular order. That is, a list represents a collection of data values as a sequence. The most common method for implementing this structure is a linked list, in which items are linked to one another. Linked lists are of several types:

In a singly linked list, each item points to its successor, as illustrated above.

In a doubly linked list, a given item can refer either to its predecessor or to its successor, as illustrated above.

In a circularly linked list, the last element in thee list refers to the first element, rather than to null, as illustrated above.

Linked lists accommodate items sizes and allow easy insertion and deletion of items. One potential disadvantage of using a list is that performance for retrieving a specified item in a list of size n is linear - 0n, as it requires potentially traversing all n elements in the worst case. Lists are sometimes used directly by kernel algorithms. Frequently, though, they are used for constructing more powerful data structures, such as stacks and queues.

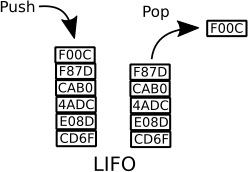

Stack

A stack is a sequentially ordered data structure that uses the last in, first out(LIFO) principle for adding and removing items, meaning that the last item placed onto a stack is the first item removed. The operation for inserting and removing items from a stack are known as push and pop, irrespectively. An operating system often uses a stack when invoking function calls. Parameters, local variables, and the return addresses are pushed onto the stack. When a function is called; returning from the function call pops those items off the stack.

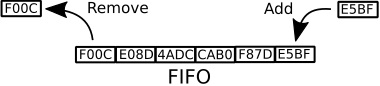

Queue

A queue, in contrast is a sequentially ordered data structure that uses the first in, first out (FIFO) principle: items are removed from a queue in the order in which they are inserted. There are many everyday examples of queues, including shoppers waiting in a checkout line at a store and cars waiting in line at a traffic signal. Ques are also quit common in operating systems - jobs that are sent to a printer are typically printed in the order in which they were submitted, for example. Tasks that are waiting to be run on an available CPU are often organized in queues.

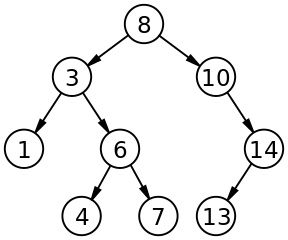

Tree

A tree is a data structure that can be used to represent data hierarchically. Data values in a tree structure are linked through parent - child relationships. In a general tree, a parent may have unlimited number of children. In a binary tree, a parent may have at most two children, which we term the left child and the right child. A binary search tree additionally requires an ordering between the parent's two children in which left_child <= right_child.

The picture above provides an example of a binary search tree.

When we search for an item in a binary search tree, the worst-case performance is 0(n) (consider how this can occur). To remedy this situation, we can use an algorithm to create a balanced binary search tree. Here a tress containing n items has at most 1g n levels, thus ensuring worst-case performance of 0(1g n).

About the Authors

Abraham Silberschatz is the Sidney J. Weinberg Professor of Computer Science at Yale University. Prior to joining Yale, he was the Vice President of the Information Sciences Research Center at Bell Laboratories. Prior to that, he held a chaired professorship in the Department of Computer Sciences at the University of Texas at Austin.

Professor Silberschatz is a Fellow of the Association of Computing Machinery (ACM), a Fellow of Institute of Electrical and Electronic Engineers (IEEE), a Fellow of the American Association for the Advancement of Science (AAAS), and a member of the Connecticut Academy of Science and Engineering.

Greg Gagne is chair of the Computer Science department at Westminster College in Salt Lake City where he has been teaching since 1990. In addition to teaching operating systems, he also teaches computer networks, parallel programming, and software engineering.

Operating System Concepts, now in its ninth edition, continues to provide a solid theoretical foundation for understanding operating systems. The ninth edition has been thoroughly updated to include contemporary examples of how operating systems function. The text includes content to bridge the gap between concepts and actual implementations. End-of-chapter problems, exercises, review questions, and programming exercises help to further reinforce important concepts. A new Virtual Machine provides interactive exercises to help engage students with the material.

Reader Adam Sinclair says, "I'm writing this review from the perspective of a student. I am finishing an Operating Systems course at university and I have to say this book is fantastic at introducing new concepts. If there is ever a conversation about OS, I always refer to this book. The content is very well laid out and organized in a way that can be read from beginning to end. There is no need to jump from one chapter to another (unless you want to skip sections)."

Reader Chetan Sharma says, "This book is bible for operating system knowledge. It covers very important concepts of Process Management and Memory Management. This book is good for all type of readers - Beginner, Intermediate and Advanced reader. Highly recommended for Students/Professionals/Readers who want to enhance their knowledge.

More Computer Architecture Articles:

• Intel's Sandy Bridge Micro-Architecture

• Real-Time CPU Scheduling

• CPU Chip Packaging

• Digital to Analog Convertion with a Microcontroller

• Oscilloscope Required for Serious Digital Electronics Work

• Operating System Memory Management

• Priority CPU Scheduling Algorithm

• Getting started with Raspberry Pi

• Factors in Choosing an Oscilloscope

• AMD Sempron Processor